@zifehng

2017-04-24T07:14:06.000000Z

字数 4067

阅读 2932

input子系统事件处理层(evdev)的环形缓冲区

linux input evdev circular_buffer

kernel version: linux-4.9.13

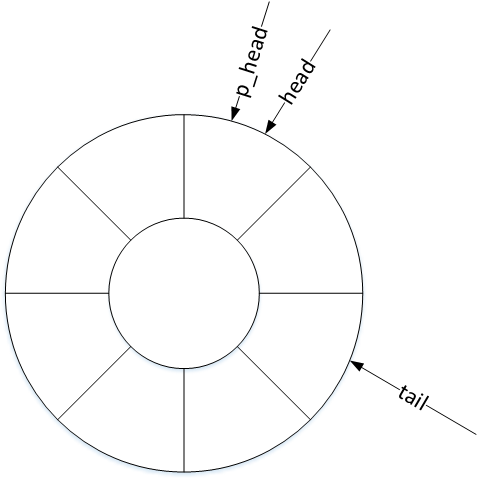

在事件处理层()中结构体evdev_client定义了一个环形缓冲区(circular buffer),其原理是用数组的方式实现了一个先进先出的循环队列(circular queue),用以缓存内核驱动上报给用户层的input_event事件。

struct evdev_client {unsigned int head; // 头指针unsigned int tail; // 尾指针unsigned int packet_head; // 包指针spinlock_t buffer_lock;struct fasync_struct *fasync;struct evdev *evdev;struct list_head node;unsigned int clk_type;bool revoked;unsigned long *evmasks[EV_CNT];unsigned int bufsize; // 循环队列大小struct input_event buffer[]; // 循环队列数组};

evdev_client对象维护了三个偏移量:head、tail以及packet_head。head、tail作为循环队列的头尾指针记录入口与出口偏移,那么包指针packet_head有什么作用呢?

packet_head

内核驱动处理一次输入,可能上报一到多个input_event事件,为表示处理完成,会在上报这些input_event事件后再上报一次同步事件。头指针head以input_event事件为单位,记录缓冲区的入口偏移量,而包指针packet_head则以“数据包”(一到多个input_event事件)为单位,记录缓冲区的入口偏移量。

1. 环形缓冲区的工作机制

- 循环队列入队算法:

head++;head &= bufsize - 1;

- 循环队列出队算法:

tail++;tail &= bufsize - 1;

- 循环队列已满条件:

head == tail

- 循环队列为空条件:

packet_head == tail

“求余”和“求与”

为解决头尾指针的上溢和下溢现象,使队列的元素空间可重复使用,一般循环队列的出入队算法都采用“求余”操作:

head = (head + 1) % bufsize; // 入队

tail = (tail + 1) % bufsize; // 出队

为避免计算代价高昂的“求余”操作,使内核运作更高效,input子系统的环形缓冲区采用了“求与”算法,这要求bufsize必须为2的幂,在后文中可以看到bufsize的值实际上是为64或者8的n倍,符合“求与”运算的要求。

2. 环形缓冲区的构造以及初始化

用户层通过open()函数打开input设备节点时,调用过程如下:

open() -> sys_open() -> evdev_open()

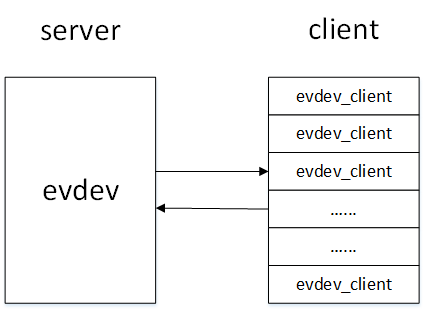

在evdev_open()函数中完成了对evdev_client对象的构造以及初始化,每一个打开input设备节点的用户都在内核中维护了一个evdev_client对象,这些evdev_client对象通过evdev_attach_client()函数注册在evdev[1]对象的内核链表上。

接下来我们具体分析evdev_open()函数:

static int evdev_open(struct inode *inode, struct file *file){struct evdev *evdev = container_of(inode->i_cdev, struct evdev, cdev);// 1.计算环形缓冲区大小bufsize以及evdev_client对象大小sizeunsigned int bufsize = evdev_compute_buffer_size(evdev->handle.dev);unsigned int size = sizeof(struct evdev_client) +bufsize * sizeof(struct input_event);struct evdev_client *client;int error;// 2. 分配内核空间client = kzalloc(size, GFP_KERNEL | __GFP_NOWARN);if (!client)client = vzalloc(size);if (!client)return -ENOMEM;client->bufsize = bufsize;spin_lock_init(&client->buffer_lock);client->evdev = evdev;// 3. 注册到内核链表evdev_attach_client(evdev, client);error = evdev_open_device(evdev);if (error)goto err_free_client;file->private_data = client;nonseekable_open(inode, file);return 0;err_free_client:evdev_detach_client(evdev, client);kvfree(client);return error;}

在evdev_open()函数中,我们看到了evdev_client对象从构造到注册到内核链表的过程,然而它是在哪里初始化的呢?其实kzalloc()函数在分配空间的同时就通过__GFP_ZERO标志做了初始化:

static inline void *kzalloc(size_t size, gfp_t flags){return kmalloc(size, flags | __GFP_ZERO);}

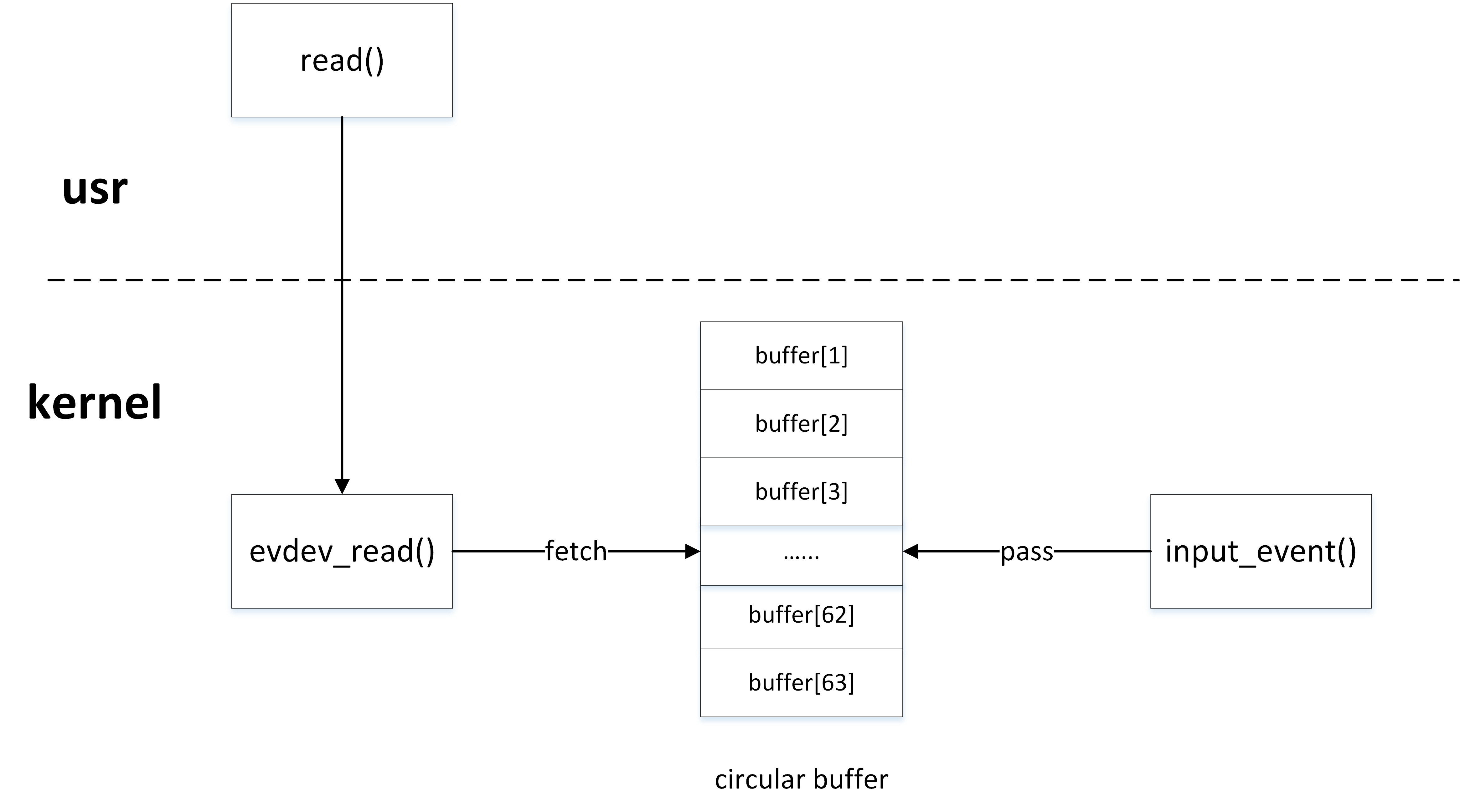

3. 生产者/消费者模型

内核驱动与用户程序就是典型的生产者/消费者模型,内核驱动产生input_event事件,然后通过input_event()函数写入环形缓冲区,用户程序通过read()函数从环形缓冲区中获取input_event事件。

3.1 环形缓冲区的生产者

内核驱动作为生产者,通过input_event()上报input_event事件时,最终调用___pass_event()函数将事件写入环形缓冲区:

static void __pass_event(struct evdev_client *client,const struct input_event *event){// 将input_event事件存入缓冲区,队头head自增指向下一个元素空间client->buffer[client->head++] = *event;client->head &= client->bufsize - 1;// 当队头head与队尾tail相等时,说明缓冲区空间已满if (unlikely(client->head == client->tail)) {/** This effectively "drops" all unconsumed events, leaving* EV_SYN/SYN_DROPPED plus the newest event in the queue.*/client->tail = (client->head - 2) & (client->bufsize - 1);client->buffer[client->tail].time = event->time;client->buffer[client->tail].type = EV_SYN;client->buffer[client->tail].code = SYN_DROPPED;client->buffer[client->tail].value = 0;client->packet_head = client->tail;}// 当遇到EV_SYN/SYN_REPORT同步事件时,packet_head移动到队头head位置if (event->type == EV_SYN && event->code == SYN_REPORT) {client->packet_head = client->head;kill_fasync(&client->fasync, SIGIO, POLL_IN);}}

3.2 环形缓冲区的消费者

用户程序作为消费者,通过read()函数读取input设备节点时,最终在内核调用evdev_fetch_next_event()函数从环形缓冲区中读取input_event事件:

static int evdev_fetch_next_event(struct evdev_client *client,struct input_event *event){int have_event;spin_lock_irq(&client->buffer_lock);// 判缓冲区中是否有input_event事件have_event = client->packet_head != client->tail;if (have_event) {// 从缓冲区中读取一次input_event事件,队尾tail自增指向下一个元素空间*event = client->buffer[client->tail++];client->tail &= client->bufsize - 1;if (client->use_wake_lock &&client->packet_head == client->tail)wake_unlock(&client->wake_lock);}spin_unlock_irq(&client->buffer_lock);return have_event;}